Radley Balko is at it again. This time, the focus of his attention is a ruling on tool-mark analysis.

"This brings me to the September D.C. opinion of United States v. Marquette Tibbs, written by Associate Judge Todd E. Edelman. In this case, the prosecution wanted to put on a witness who would testify that the markings on a shell casing matched those of a gun discarded by a man who had been charged with murder. The witness planned to testify that after examining the marks on a casing under a microscope and comparing it with marks on casings fired by the gun in a lab, the shell casing was a match to the gun.

This sort of testimony has been allowed in thousands of cases in courtrooms all over the country. But this type of analysis is not science. It’s highly subjective. There is no way to calculate a margin for error. It involves little more than looking at the markings on one casing, comparing them with the markings on another and determining whether they’re a “match.” Like other fields of “pattern matching” analysis, such as bite-mark, tire-tread or carpet-fiber analysis, there are no statistics that analysts can produce to back up their testimony. We simply don’t know how many other guns could have created similar markings. Instead, the jury is simply asked to rely on the witness’s expertise about a match."

As noted in the previous post, the "pattern matching" comparisons are prone to error when an appropriate sample size is not used as a control.

The issue, as far as statistics are concerned, is not necessarily the observations of the analyst but the conclusions. Without an appropriate sample, how does one know where the observed results would fall within a normal distribution? Are the results one is observing "typical" or "unique"? How would you know? You would construct a valid test.
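As a rough illustration of what "where the observed results would fall" can mean in practice, here's a minimal Python sketch. It assumes you already have a reference sample of comparison scores and that those scores are roughly normal. The function name and the scores are hypothetical, made up for the example, and not drawn from any real casework or tool.

```python
from statistics import NormalDist, mean, stdev

def locate_in_reference(observed, reference):
    """Return (z, two_sided_p) for `observed` against a reference sample.

    Assumes the reference scores are roughly normal; that assumption
    should itself be checked before relying on the result.
    """
    mu = mean(reference)
    sigma = stdev(reference)  # sample standard deviation (n - 1)
    z = (observed - mu) / sigma
    two_sided_p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, two_sided_p

# Hypothetical scores from comparisons against known non-matching sources.
reference_scores = [10, 12, 14, 16, 18]
z, p = locate_in_reference(30, reference_scores)
print(f"z = {z:.2f}, two-sided p = {p:.6f}")
```

A z near zero says the observation is "typical" of the reference sample. A large z says it's unusual. With only five reference values, as here, neither statement deserves much weight, which is the point about sample size.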

Balko's point? No one seems to be doing this - conducting valid tests. Well, almost no one. I certainly do - conduct valid tests, that is.

If you're interested in what I'm talking about and want to learn more about calculating sample sizes and comparing observed results, sign up today for Statistics for Forensic Analysts (link).

Have a great weekend, my friends.

This blog is no longer active and is maintained for archival purposes. It served as a resource and platform for sharing insights into forensic multimedia and digital forensics. Whilst the content remains accessible for historical reference, please note that methods, tools, and perspectives may have evolved since publication. For my current thoughts, writings, and projects, visit AutSide.Substack.com. Thank you for visiting and exploring this archive.

Saturday, February 29, 2020

Tuesday, February 25, 2020

Sample Size? Who needs an appropriate sample?

Last year, I spent a lot of time talking about statistics and the need for analysts to understand this important science. I've written a lot about the need for appropriate samples, especially around the idea of supporting a determination of "match" or "identification."

Many in the discipline have responded essentially saying, it is what it is - we don't really need to know about these topics or incorporate these concepts in our practice.

Now comes a new study from Sophie J. Nightingale and Hany Farid, Assessing the reliability of a clothing-based forensic identification. If you've been to one of my Content Analysis classes, or one of my Advanced Processing Techniques sessions, reading the new study won't yield much new information from a conceptual standpoint. It will, however, lend a bunch of new data affirming the need for appropriate samples and methods when conducting work in the forensic sciences.

From the new study: "Our justice system relies critically on the use of forensic science. More than a decade ago, a highly critical report raised significant concerns as to the reliability of many forensic techniques. These concerns persist today. Of particular concern to us is the use of photographic pattern analysis that attempts to identify an individual from purportedly distinct features. Such techniques have been used extensively in the courts over the past half century without, in our opinion, proper validation. We propose, therefore, that a large class of these forensic techniques should be subjected to rigorous analysis to determine their efficacy and appropriateness in the identification of individuals."

The important thing about the study is that the authors collected an appropriate set of samples to conduct their analysis.

Check it out and see what I mean. Notice how the results develop from the samples collected. See how they differ from an examination of a single image. Thus, I always say, under a certain sample size, you're better off flipping a coin.
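One way to make the coin-flip remark concrete is to put a confidence interval around an observed accuracy and see whether it still includes 50%. The sketch below uses the Wilson score interval, which is my choice for the illustration and not a method taken from the paper. The counts are hypothetical.

```python
import math
from statistics import NormalDist

def wilson_interval(successes, n, confidence=0.95):
    """Wilson score interval for a binomial proportion."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p_hat = successes / n
    denom = 1 + z * z / n
    centre = (p_hat + z * z / (2 * n)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half

# Hypothetical results: the same 70% observed accuracy at two sample sizes.
for correct, trials in [(7, 10), (700, 1000)]:
    low, high = wilson_interval(correct, trials)
    includes_chance = low <= 0.5 <= high
    print(f"{correct}/{trials}: {low:.3f} to {high:.3f}, "
          f"includes 0.5: {includes_chance}")
```

Same observed accuracy, very different conclusions. At 10 trials the interval still straddles chance. At 1,000 it doesn't.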

If, after reading the paper, you're interested in increasing your knowledge of statistics and experimental science, feel free to sign up for Statistics for Forensic Analysts.

Have a great day, my friends.

Thursday, January 30, 2020

Facial Comparison

FISWG's Facial Comparison Overview describes Morphological Analysis as "a method of facial comparison in which the features of the face are described, classified, and compared. Conclusions are based on subjective observations." ASTM's E3149 − 18, Standard Guide for Facial Image Comparison Feature List for Morphological Analysis, provides practitioners a 19-table guide of features to aid the examiner in the classification of features within images / videos of faces.

Why bring this up?

A fairly recent case in California highlights the need for practitioners to be not only aware of the consensus standards but to employ them in their work. In People v Hernandez (link), the unpublished appellate ruling describes the work of an analyst employing a novel technique which was excluded at the trial level.

"Upon review, we conclude [name omitted] proffered comparisons were based on matter of a type on which an expert may not reasonably rely, and they were speculative. The trial court acted well within its authority as a gatekeeper in essentially determining that [name omitted] was not employing the same level of intellectual rigor of an expert in the relevant field. Notably, the theories relied upon by [name omitted] were new to science as well as the law, and he did not establish that his theories had gained general acceptance in the relevant scientific community or were reliable."

Given FISWG's Facial Comparison Overview, there is a consensus on the general methods for Facial Comparison:

- Holistic Comparison

- Morphological Analysis

- Photo-anthropometry

- Superimposition

The analyst's choice?

"Asked how he would compare the images, [name omitted] explained he would use, in part, Euclidean geometry. He admitted this was a technique that other people did not use. Also, he used what he called Michelangelo theory — [name omitted]'s technique of taking away portions of a distorted and/or blurred digital image to reveal the true features of the person in the iPhone video still and Exhibit 8—and an unnamed and unexplained technique for looking at bad images. [name omitted] thought his margin of error was five-to-eight percent."

"On cross-examination, [name omitted] agreed he was "somewhat unique" in using Euclidean geometry in image analysis and comparison. He did not have a scientific degree or a degree in Euclidean geometry. When asked if his use of Euclidean geometry had been subjected to scientific and peer view, he stated, "Sometimes, but not in this case because it's a theorem to understand my logic. I'm not drawing lines. . . . [¶] . . . [¶] I'm using a theory. . . . I'm defending my logic with a theory in geometry[.]" On an as-needed basis, [name omitted] used a member of his staff for peer review. The prosecutor inquired if [name omitted] was aware of anyone using Euclidean geometry in the forensic analysis of photographs like him, and he replied, "By name? No."

"[name omitted] was asked if he had made any effort "to distinguish between artifacts and properties of the individuals depicted" in Exhibit 8. He replied, "No. Not in the report." He was then asked if he tried to make a distinction in his analysis. He said, "As best as . . . one could possibly do, but there's quite a bit of pixilation on that image."

In California, there's a lot of precedent on the admission of expert testimony. This case cites Sargon v USC (link) in addressing the issue of the appropriateness of the Trial Court's exclusion of the analyst's testimony and work.

"[In California,] the gatekeeper's role `is to make certain that an expert, whether basing testimony upon professional studies or personal experience, employs in the courtroom the same level of intellectual rigor that characterizes the practice of an expert in the relevant field.' [Citation.]" (Sargon, supra, 55 Cal.4th at p. 772.)"

"Based on the foregoing, we conclude [name omitted]'s comparisons were properly excluded. His method was full of theories and assumptions, and he ignored some or all of the artifacts at different points. Simply put, his opinion was not based on matter of a type on which an expert may reasonably rely. Beyond that, because [name omitted] essentially confused artifacts for features, his opinion was speculative."

The ruling goes on to note relevant cases to support the conclusion that the exclusion was appropriate. As such, it's a good reference.

But to the point, why make up a new technique when there's plenty of guidance out there regarding photographic comparison / facial comparison? For Morphological Analysis, you can easily translate the ASTM's guide into a spreadsheet that can be used to document features and locations. Yes, all of the current methods necessarily result from subjective observations. Likewise, conclusions are based upon those observations and as such, should be adequately supported.
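If a spreadsheet program isn't handy, the same worksheet idea can be sketched in a few lines of Python that write a CSV file. The column names below are my own assumption about what such a worksheet might hold, and the component and descriptor entries are placeholders. Copy the real ones from the ASTM guide's tables, not from this example.

```python
import csv
import io

# Hypothetical columns; adjust to match your own documentation practice.
COLUMNS = ["component", "descriptor", "questioned_image", "known_image",
           "image_region", "observation_notes"]

def new_worksheet(components):
    """Build CSV text with one blank row per (component, descriptor) pair."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    for component, descriptor in components:
        # Columns not supplied here are left empty for the examiner to fill in.
        writer.writerow({"component": component, "descriptor": descriptor})
    return buffer.getvalue()

# Placeholder entries for illustration only, not quoted from the standard.
placeholder_components = [("<component from guide>", "<descriptor from guide>")]
print(new_worksheet(placeholder_components))
```

The returned text can be saved with a .csv extension and opened in any spreadsheet program.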

If you're involved in a case where photographic comparison / facial comparison is at issue, feel free to contact me regarding a review of the evidence and/or the work done previously, or by opposing counsel's analyst. It's important to note that giving one's work to "a member of [one's] staff for peer review" is not actually a "peer review," it's a technical review. If you'd like an actual peer review, contact me today.

Likewise, if you want to learn this amazing discipline, we regularly feature classes on photographic comparison in Henderson, NV. We can also bring the class to you.

Have a great day, my friends.

Thursday, January 23, 2020

What is Super Resolution?

Back in early 2017, I wrote an article for Axon in support of their now-dissolved partnership with my former employer about Super Resolution. It seems that Super Resolution is back in the news. By way of updating that post, let's revisit just what's going on with the technology and the few problems it may cause if you don't understand what's happening.

Vendor reps note that Super Resolution works at the "sub-pixel" level, and people's eyes roll. If the pixel is the smallest unit of measure, a single picture element, how can there be a "sub-pixel?" That's a very good question. Let's take a look at the answer.

From the report in Amped SRL's FIVE: "The Super Resolution filter applies a sub-pixel registration to all the frames of a video, then merges the motion corrected frames together, along with a deblurring filtering. If a Selection is set, then the selected area will be optimized."

Ok. What is sub-pixel registration?

First, let's look at how the authors of Super-Resolution Without Explicit Subpixel Motion Estimation set up the premise: "The coefficients of this series are estimated by solving a local weighted least-squares problem, where the weights are a function of the 3-D space-time orientation in the neighborhood. As this framework is fundamentally based upon the comparison of neighboring pixels in both space and time, it implicitly contains information about the local motion of the pixels across time, therefore rendering unnecessary an explicit computation of motions of modest size. The proposed approach not only significantly widens the applicability of super-resolution methods to a broad variety of video sequences containing complex motions, but also yields improved overall performance." That's quite a mouthful.

Here's the breakdown.

The first thing we must understand is the pixel neighborhood. The neighborhood of a pixel is the collection of pixels that surround it. Two pixels are neighbors (connected) if their edges or corners touch.
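For those who think in code, here's a minimal Python sketch of a pixel neighborhood. The function name and the `include_diagonals` switch are mine, for illustration. With diagonals included, it's the familiar 8-connected neighborhood. Without them, it's the 4-connected one.

```python
def neighbourhood(row, col, height, width, include_diagonals=True):
    """Return the in-bounds neighbours of pixel (row, col)."""
    offsets = [(-1, 0), (1, 0), (0, -1), (0, 1)]            # edges touch
    if include_diagonals:
        offsets += [(-1, -1), (-1, 1), (1, -1), (1, 1)]    # corners touch
    return [(row + dr, col + dc)
            for dr, dc in offsets
            if 0 <= row + dr < height and 0 <= col + dc < width]

# A pixel in the middle of a 3x3 image versus one in its corner.
print(len(neighbourhood(1, 1, 3, 3)))
print(len(neighbourhood(0, 0, 3, 3)))
```

Note that pixels on the border of the image have fewer neighbors, which is one reason filters often behave differently at the edges of a frame.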

Next, we must understand what registration means. Image registration is the process of aligning two or more images of the same scene. This process involves designating one image as the reference (also called the reference image or the fixed image), and applying geometric transformations to the other images so that they align with the reference.
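To show the idea of registration at its simplest, here's a Python sketch that tries every whole-pixel shift within a small window and keeps the one where the two frames disagree least. This is a brute-force illustration under the assumption of pure translation. It's not how FIVE or any other product implements registration, and the frames are hypothetical.

```python
def mean_squared_difference(reference, moving, dy, dx):
    """Mean squared difference where reference[y][x] meets moving[y+dy][x+dx]."""
    height, width = len(reference), len(reference[0])
    total, count = 0.0, 0
    for y in range(height):
        for x in range(width):
            my, mx = y + dy, x + dx
            if 0 <= my < height and 0 <= mx < width:
                diff = reference[y][x] - moving[my][mx]
                total += diff * diff
                count += 1
    return total / count

def register_translation(reference, moving, max_shift=2):
    """Return the (dy, dx) shift that best aligns `moving` to `reference`."""
    candidates = [(dy, dx)
                  for dy in range(-max_shift, max_shift + 1)
                  for dx in range(-max_shift, max_shift + 1)]
    return min(candidates,
               key=lambda s: mean_squared_difference(reference, moving, *s))

# Hypothetical 6x6 frames: the same small blob, moved one pixel down and right.
reference = [[0] * 6 for _ in range(6)]
moving = [[0] * 6 for _ in range(6)]
reference[2][2], reference[2][3], reference[3][2] = 9, 5, 3
moving[3][3], moving[3][4], moving[4][3] = 9, 5, 3
print(register_translation(reference, moving))
```

Once the shift is known, the moving frame can be transformed back onto the reference. Real footage also rotates, scales, and changes perspective, which is why practical tools offer richer transforms than this.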

Let's put it together. A static pixel (P) in a single image is easy to understand. But, what about video? That pixel represents some place in 4D space-time. That 4D space-time orientation will change as time progresses. We want to line up (register) that pixel across the multiple frames. Super Resolution thus tracks implicit information about the motion of the pixel across 4D space-time, and corrects for that motion. The result of the process is a single higher-resolution image.
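So how can there be a "sub-pixel"? A toy, one-dimensional Python sketch may help. Two low-resolution frames sample the same scene, but the second is offset by half a low-resolution pixel. Put each sample back where it came from and you recover detail neither frame holds on its own. This is a deliberately simplified "shift and add" illustration with known offsets and point sampling. It isn't the kernel-regression method from the paper quoted above, nor the FIVE filter, and the scene values are made up.

```python
def sample_low_res(scene, offset, factor=2):
    """Point-sample every `factor`-th value of `scene`, starting at `offset`."""
    return scene[offset::factor]

def shift_and_add(frames_with_offsets, factor=2):
    """Place each low-res sample back on the fine-grid position it came from."""
    length = sum(len(frame) for frame, _ in frames_with_offsets)
    fine = [None] * length
    for frame, offset in frames_with_offsets:
        for i, value in enumerate(frame):
            fine[offset + i * factor] = value
    return fine

scene = [0, 1, 4, 9, 16, 25, 36, 49]   # hypothetical fine-detail signal
frame_a = sample_low_res(scene, 0)     # camera at rest
frame_b = sample_low_res(scene, 1)     # camera moved half a low-res pixel
restored = shift_and_add([(frame_a, 0), (frame_b, 1)])
print(frame_a, frame_b, restored)
```

In real video the offsets aren't handed to you. They have to be estimated, explicitly or implicitly, and a sensor integrates light over each pixel's area, so it isn't sampling a single point. That estimation step is where the results can go wrong.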

The practical implications are these:

- Frame Averaging works well when the object of interest doesn't move. The frames are averaged and the things that are different across frames are removed and the things that are the same remain.

- To help with a Frame Averaging exercise, we can use a perspective registration process to align the item of interest - a license plate for example - across frames. This works well when the item has moved to an entirely new location, like in low frame rate video.
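Frame Averaging, as described in the first point above, is simple enough to sketch in Python. The sketch assumes the frames are already aligned and that the only difference between them is random noise. The pixel values, the noise level, and the function names are hypothetical.

```python
import random

def average_frames(frames):
    """Pixel-wise mean of equally sized frames (lists of rows)."""
    count = len(frames)
    height, width = len(frames[0]), len(frames[0][0])
    return [[sum(frame[y][x] for frame in frames) / count
             for x in range(width)]
            for y in range(height)]

def mean_abs_error(a, b):
    """Average absolute per-pixel difference between two frames."""
    values = [abs(pa - pb) for ra, rb in zip(a, b) for pa, pb in zip(ra, rb)]
    return sum(values) / len(values)

random.seed(0)  # fixed seed so the illustration is repeatable
clean = [[50, 200], [200, 50]]  # hypothetical static scene
noisy_frames = [[[value + random.gauss(0, 20) for value in row]
                 for row in clean]
                for _ in range(64)]
averaged = average_frames(noisy_frames)
print(mean_abs_error(noisy_frames[0], clean), mean_abs_error(averaged, clean))
```

What changes from frame to frame (the noise) tends to cancel, and what stays the same (the scene) remains. If the object of interest moves between frames, the same averaging smears it, which is why registration has to come first.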

But, when the motion is subtle, super resolution is a better choice.

Here's an example. The park service was investigating a vandalism and poaching incident. There's a video that they believe was taken in the area of the incident. Within the video, there's a sign in the background that contains location information (text) that's blurred by the motion of the shaking, hand-held camera. There's enough motion to eliminate Frame Average as a processing choice. There's not enough motion to use a perspective registration function to align the sign correctly. Super resolution is the best choice to correct for the motion blur and register the pixels that make the text of the sign.

In this case, super resolution was indeed the best choice. The sign's information was revealed and the location was determined.

And now the potential pitfalls ...

- Brand new pixels and pixel neighborhoods are created in this process.

- A brand new piece of evidence (demonstrative) is created in this process.

Whenever you perform a perspective registration, your geometric transform necessarily creates new pixels and neighborhoods. In FIVE, during the process of using the filters, the creation is "virtual" in that it all happens in CPU and RAM. These new pixels and neighborhoods are really only created when you write the results of your processing out as a new file.
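To see why a geometric transform necessarily creates new pixels, consider what happens when the transformed grid lands between the original pixel centers. The value there has to be computed. Here's a minimal Python sketch using bilinear interpolation, which is one common choice and my assumption for the example, not a statement about which interpolator any particular tool uses. The tiny image is made up.

```python
def bilinear_sample(image, y, x):
    """Interpolate `image` at a fractional (y, x) position inside the image.

    Assumes non-negative coordinates within the image bounds.
    """
    y0, x0 = int(y), int(x)
    y1 = min(y0 + 1, len(image) - 1)
    x1 = min(x0 + 1, len(image[0]) - 1)
    fy, fx = y - y0, x - x0
    top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
    bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
    return top * (1 - fy) + bottom * fy

original = [[0, 10],
            [20, 40]]  # hypothetical 2x2 image
resampled = bilinear_sample(original, 0.5, 0.5)
original_values = {value for row in original for value in row}
print(resampled, resampled in original_values)
```

The resampled value is a blend of its four neighbors and appears nowhere in the original data. Multiply that by every pixel in the output and you have a new image, derived from the evidence but not identical to it.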

That brand new piece of evidence - the results written out - is a demonstrative that you've just created. You must explain its relationship to the actual evidence files and how it came to be. Indeed, you've just added a new file to the case. This fact should be disclosed in your report.

With the reports in FIVE, there is the plain English statement about the process that is lifted from the many academic papers from which Amped SRL gets their filters. Sure, when you're asked about the process performed, you can likely just read the report's description. But, what if the Trier of Fact wants to know more? How confident are you that you can explain super resolution?

Consider super resolution's main use - license plate enhancement. Your derivative file is a demonstrative in support of one side's theory of the case. Your derivative is illustrative of your opinion. Did you use the tool correctly? Are the results accurate? Is seeing believing? Given the ultra low resolutions we're usually dealing with, a slight shift in pixels can make a big difference in rendering alpha-numeric characters. This is part of the reason Amped SRL likes to use European license plates in their classes and PR - they're easy to fix. Not so in the US.

Advice like that shown above is the value of independence. A manufacturer's rep can really only show you the features. I'll show you not only how a tool works, but how to use it in different contexts, why it's sometimes inappropriate to use, and how to frame its use during testimony. If you're interested in diving deep into the discipline of video forensics, I invite you to an upcoming course. See our offerings on our web site.

Have a great day, my friends.

Tuesday, January 7, 2020

The FTC vs Axon? Axon vs the FTC? Wow!

Whilst we were all minding our own business, it seems that the US Federal Trade Commission was busy investigating Axon for anti-competitive behavior. Last Friday, Axon CEO, Rick Smith, penned a piece on LinkedIn to make his case to the public. According to Smith, the FTC believes that Axon's acquisition of VieVu in 2018 was anti-competitive.

I'm not a fly on the wall. I only know what I've read in Smith's post and the subsequent reporting and interviews. To be fair, the press has let Smith tell Axon's side of the story. For the government's side, we have only a press release on the FTC's web site.

In terms of disclosure, it should be noted that within the scope of my prior employment with Amped Software, Inc. (an Axon partner), I worked closely with several internal business units within Axon.

But, I want to break down the FTC's press release to attempt to determine what's really the problem here.

Paragraph 1: "The Federal Trade Commission has issued an administrative complaint (a public version of which will be available and linked to this news release as soon as possible) challenging Axon Enterprise, Inc.’s consummated acquisition of its body-worn camera systems competitor VieVu, LLC. Before the acquisition, the two companies competed to provide body-worn camera systems to large, metropolitan police departments across the United States."

Analysis: Yes, they were in the same market. But, given the many quality issues with VieVu's product line, they weren't really competing - in the same way that the best Texas high school football team is really no competition for the worst of the NFL in any given year. My opinion on how VieVu got "competitive" on deals was to compete on price, not on quality. It was their low price that got them in the door at police departments, but it was their lack of quality that ruined the company.

Paragraph 2: "According to the complaint, Axon’s May 2018 acquisition reduced competition in an already concentrated market. Before their merger, Axon and VieVu competed to sell body-worn camera systems that were particularly well suited for large metropolitan police departments. Competition between Axon and VieVu resulted in substantially lower prices for large metropolitan police departments, the complaint states. Axon and VieVu also competed vigorously on non-price aspects of body-worn camera systems. By eliminating direct and substantial competition in price and innovation between dominant supplier Axon and its closest competitor, VieVu, to serve large metropolitan police departments, the merger removed VieVu as a bidder for new contracts and allowed Axon to impose substantial price increases, according to the complaint."

Analysis: Given the analysis of the first paragraph, VieVu was certainly not "particularly well suited" to deliver on any department's needs. Additionally, it wasn't the "competition" that drove prices down, it was VieVu's essentially offering their goods below cost to get in the door that drove prices down. Selling below cost isn't sustainable, and police agencies must look at all factors of a vendor - like the fact that unsustainable business practices will likely mean that the company won't be around throughout the lifecycle of the product.

Paragraph 3: “Competition not only keeps prices down, but it drives innovation that makes products better,” said Ian Conner, Director of the FTC’s Bureau of Competition. “Here, the stakes could not be higher. The Commission is taking action to ensure that police officers have access to the cutting-edge products they need to do their job, and police departments benefit from the lower prices and innovative products that competition had provided before the acquisition.”

Analysis: The market is still chock-full of offerings. There's Motorola/Watchguard, Panasonic, Getac, Utility, Coban, Visual Labs/Samsung, L3/Mobile Vision, and Digital Ally, plus over 10k results from China on alibaba.com. You can get a body camera from China's LS Vision for under $100/unit. That's a lot of competition.

Paragraph 4: "The complaint also states that as part of the merger agreement, Axon entered into several long-term ancillary agreements with VieVu’s former parent company, Safariland, that also substantially lessened actual and potential competition. These agreements barred Safariland from competing with Axon now and in the future on all of Axon’s products, limited solicitation of customers and employees by either company, and stifled potential innovation or expansion by Safariland. These restraints, some of which were intended to last more than a decade, are not reasonably limited to protect a legitimate business interest, according to the complaint."

Analysis: This part is just silly. Axon says to Safariland, known for their holsters and gear, stay with what you're good at (holsters and gear) and we'll stay with what we're good at. Stay out of our lane, and we'll stay out of yours. This is a good business decision, not anti-competitive behavior. You also have to be myopic to not consider that Safariland only bought VieVu in 2015. According to the WSJ, "Vievu LLC, a maker of police body cameras, has been acquired by Safariland LLC, which is bulking up its portfolio of security products ahead of a planned initial public offering next year." Safariland's entry into other vertical markets followed a similar pattern. But, at their heart, they're a holster and gear company, so their exit from the technology sector is no great loss.

Paragraph 5: "The Commission vote to issue the administrative complaint was 5-0. The administrative trial is scheduled to begin on May 19, 2020."

Analysis: What is missing is a specific citation as to which federal laws were violated. Likely, there was no specific violation of US law, but rather a violation of an FTC Rule. The FTC has the authority to pass and enforce its own rules outside of the normal US lawmaking process. Smith outlines the administrative hearing process in his op-ed. Smith is correct: this won't see a "court room," as the vast majority of FTC processes are kept in-house.

An examination of the FTC's "Competition Enforcement Database" found only 25 competition enforcement actions for 2018, which was down from 2017's 32 actions. Given the totality of commercial activity in the US, this is an incredibly small number. The assumption is that they only go after the most egregious of behaviors. If that's the case, what's really behind this action against Axon? VieVu was delivering faulty products. It was losing deals on its own. Axon did a mutually beneficial deal with Safariland to take VieVu off their hands. What's actually wrong with this? Does this rise to a Standard Oil or AT&T level? Hardly. So why this case? That's the problem with administrative processes: we'll never know. There's a complete lack of transparency into their decision making or procedures.

I do tend to agree with Smith that this issue rises above brands and technology. It's a peek into the workings of the Administrative State in the US. What remains to be seen is if the US government grants Axon permission to sue the FTC. Stay tuned.

I'm not a fly on the wall. I only know what I've read in Smith's post and the subsequent reporting and interviews. To be fair, the press has let Smith tell Axon's side of the story. For the government's side, we have only a press release on the FTC's web site.

In terms of disclosure, it should be noted that within the scope of my prior employment with Amped Software, Inc. (an Axon partner), I worked closely with several internal business units within Axon.

But, I want to break down the FTC's press release to attempt to determine what's really the problem here.

Paragraph 1: "The Federal Trade Commission has issued an administrative complaint (a public version of which will be available and linked to this news release as soon as possible) challenging Axon Enterprise, Inc.’s consummated acquisition of its body-worn camera systems competitor VieVu, LLC. Before the acquisition, the two companies competed to provide body-worn camera systems to large, metropolitan police departments across the United States."

Analysis: Yes, they were in the same market. But, given the many quality issues with VieVu's product line, they weren't really competing - in the same way that the best Texas high school football team is really no competition for the worst of the NFL in any given year. My opinion on how VieVu got "competitive" on deals was to compete on price, not on quality. It was their low price that got them in the door at police departments, but it was their lack of quality that ruined the company.

Paragraph 2: "According to the complaint, Axon’s May 2018 acquisition reduced competition in an already concentrated market. Before their merger, Axon and VieVu competed to sell body-worn camera systems that were particularly well suited for large metropolitan police departments. Competition between Axon and VieVu resulted in substantially lower prices for large metropolitan police departments, the complaint states. Axon and VieVu also competed vigorously on non-price aspects of body-worn camera systems. By eliminating direct and substantial competition in price and innovation between dominant supplier Axon and its closest competitor, VieVu, to serve large metropolitan police departments, the merger removed VieVu as a bidder for new contracts and allowed Axon to impose substantial price increases, according to the complaint."

Analysis: Given the analysis of the first paragraph, VieVu was certainly not "particularly well suited" to deliver on any department's needs. Additionally, it wasn't the "competition" that drove prices down, it was VieVu's essentially offering their goods below cost to get in the door that drove prices down. Selling below cost isn't sustainable, and police agencies must look at all factors of a vendor - like the fact that unsustainable business practices will likely mean that the company won't be around throughout the lifecycle of the product.

Paragraph 3: “Competition not only keeps prices down, but it drives innovation that makes products better,” said Ian Conner, Director of the FTC’s Bureau of Competition. “Here, the stakes could not be higher. The Commission is taking action to ensure that police officers have access to the cutting-edge products they need to do their job, and police departments benefit from the lower prices and innovative products that competition had provided before the acquisition.”

Analysis: The market is still chock-full of offerings. There's Motorola/Watchguard, Panasonic, Getac, Utility, Coban, Visual Labs/Samsung, L3/Mobile Vision, and Digital Ally, plus over 10k results from China on alibaba.com. You can get a body camera from China's LS Vision for under $100/unit. That's a lot of competition.

Monday, December 30, 2019

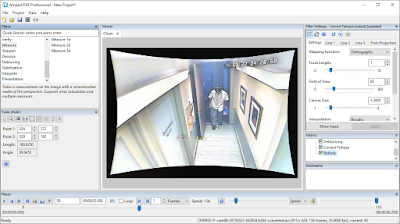

An Amped FIVE UX tip to wrap up the year

The recent update to Amped SRL's flagship tool, FIVE, brought some UX headaches for many US-based users. You see, the redesigned reporting function does something new and unexpected. Let's take a look, and offer a couple of work-arounds.

Let's say you're used to your Evidence.com work flow, the one where all your evidence goes into a single folder for upload, and you're processing files for a multi-defendant case. If there are files featuring only one of the defendants, which happens often, you'll want to have separate project (.afp) files for each evidence item. This will make tagging easier. This will make discovery easier. This will make the new reporting functionality become non-functional.

You see, the new reporting feature doesn't just create a report, it creates a folder to hold the report and the Amped SRL logos - and calls the folder "report." That's fine for the first file that you process. But the next project? Well, when you go to generate the report, FIVE will see that there's a "report" folder there already. What does it do? Does it prompt you to say, "hey! there's already a folder with that name. What do you want to do?" Of course not. Since you're not expecting the new reporting behavior, and there's a complete lack of documentation of the new reporting format, you'll just keep processing away. At the end of your work, there's only one folder and only one report file.

The work-around on your desktop is to put each evidence item into its own folder, within the case folder. It's an extra step, I know. You'll also have to modify the "inbox" that E.com is looking for.
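If you'd rather not create those folders by hand, the extra step can be scripted. What follows is only a minimal sketch in Python, not anything provided by Amped or Axon. The function name `split_into_item_folders` and the `_item` folder suffix are made up for illustration, and it assumes you're running it against a working copy of the case folder, never the original evidence.

```python
from pathlib import Path
import shutil


def split_into_item_folders(case_dir: Path) -> list[Path]:
    """Move each loose file in case_dir into its own subfolder.

    Hypothetical helper: the folder is named '<file stem>_item', so files
    that share a stem (e.g. cam1.mp4 and cam1.afp) land in the same folder.
    Existing subfolders are left untouched.
    """
    created: set[Path] = set()
    # Snapshot the listing first so the folders we create are not revisited.
    for item in sorted(case_dir.iterdir()):
        if not item.is_file():
            continue
        target_dir = case_dir / f"{item.stem}_item"
        target_dir.mkdir(exist_ok=True)
        # Moves (does not copy) the file; run this on a working copy only.
        shutil.move(str(item), str(target_dir / item.name))
        created.add(target_dir)
    return sorted(created)
```

Calling `split_into_item_folders(Path("path/to/case_folder"))` before you start processing gives each evidence item a folder of its own, so each project's "report" folder has somewhere separate to live. Adjust the naming to whatever your agency's case folder convention requires.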

The other weird issue is that FIVE now drops some logos and a banner as loose files in the report folder. I'm sure that this is due to FIVE's processing of the report - first to HTML - then to PDF via some freeware. One would think that in choosing PDF you wouldn't receive the side-car files, but you would be thinking wrong. Again, this has to do with the way the bit of freeware processes the report.

As an interesting aside, in a Daubert hearing, I actually got a cross-examination question that hinted at Amped SRL being pirates of software. Is anything in the product an original creation or is it just pieced together bits and such? But, I digress.

Remember, in the US, anything created in the process of analysis should be preserved and disclosed. One customer complained that it seems as though Amped SRL is throwing an extra business card in on the case file. I don't know about that. But, it does seem a bit odd for a forensic science tool to behave in such a way.

You can always revert to the previous version if you want to save time and preserve your sanity. This new version doesn't add much for the analyst anyway. You can easily install previous versions. It takes only a few minutes each time.

As with anything forensic science, always validate new releases of your favorite tools prior to deploying them on casework. If you're looking for an independent set of eyes, and would like to outsource your tool validation, we're here to help.

Have a great new year, my friends.

Sunday, December 22, 2019

Human Anatomy & Physiology

In my private practice, I often review the case work of others. This "technical review" or validation is provided for those labs that either require an outside set of eyes by policy / statute, or for those small labs and sole-proprietors who will occasionally require these services.

Recently, I was reviewing a case package related to a photographic comparison of "known" and "unknown" facial images. In reviewing the report, it was clear that the examiner had no training or education in basic human anatomy & physiology. Their terminology was generic and confusing, avoiding specific names for specific features. It made it really hard to follow their train of thought, which made it hard to understand the basis for their conclusion.

Many in police service either lack a college degree, or have a degree in criminal justice / administration of justice. These situations mean that they won't have had a specific, college level anatomy / physiology course. Or, it may be that, like me, your anatomy course was so long ago that you find yourself needing a refresher.

I got my refresher via a certificate program from the University of Michigan, on-line. But, if you don't have the time or patience to work through the weekly lessons, or if you're looking for a lower cost option, I've found an interesting alternative.

In essence, I've found a test-prep course that's designed to help doctors and medical professionals learn what they're supposed to know, and help them pass their tests. It's reasonably priced and gives you the whole program on your chosen platform.

The Human Anatomy & Physiology Course by Dr. James Ross (click here) even comes with illustrations that you could incorporate in your reports. Check it out for yourself.

You don't necessarily have to have training / education in anatomy to opine on a photographic comparison. Not all jurisdictions will care. But, it really ups your reporting game if you know what the different pieces and parts are called, and if you use professional looking graphics in your report.

If you need help or advice, please feel free to ask. I'm here to help.

Have a great day, my friends.

Monday, December 16, 2019

Independence Matters

Independence matters. When learning new materials, it's important to get an unvarnished view of the information. Too many "official" vendor-sponsored training sessions aren't at all training to competency - they're information sessions, at best, or marketing sessions, at worst.

Vendors generally don't want their employees and contractors to point out the problems with their tools. But as a practitioner, you'll want to know the limitations of that thing you're learning. You'll want to know what it can do, what it does well, and what it doesn't do - or does wrong.

Now, I understand that when people start showing you their resume, they've generally lost the argument. But, in this case, it's an important point of clarification. I do so to show you the value proposition of independent training providers like me. Having been trained and certified by California's POST for curriculum development and instruction means that I understand the law enforcement context. Yes, I was a practitioner who happened to be in police service. The training by POST takes that practitioner information and focuses it, refines it, towards creating and delivering valid learning events. Having an MEdID - a Masters of Education in Instructional Design - means that I've proven that I know how to design instructional programs that will achieve their instructional goals. The PhD in Education is just the icing on the cake, but proves that I know how to conduct research and report my results - an important skillset for evaluating the work of others.

Unlike many of the courses on offer in this space, the courses that I've designed and deliver for the forensic sciences are "competency based." I'm a member of the International Association for Continuing Education and Training (IACET) and follow their internationally recognized Standard for Competency Based Learning.

According to the IACET's research, the Competency-Based Learning (CBL) standard helps organizations to:

- Align learning with critical organizational imperatives.

- Allocate limited training dollars judiciously.

- Ensure learning sticks on the job.

- Provide learners with the tools needed to be agile and grow.

- Improve organizational outcomes, return on mission, and the bottom line.

This is why, when delivering "product training," I've always wrapped a complete curriculum around the product. I've never just taught FIVE, for example. I've introduced the discipline of forensic digital / multimedia analysis, using the platform (FIVE) to facilitate the work. This is what competency based learning looks like.

Yes, you need to know what buttons to push, and in what order, but you also need to know when it's not appropriate to use the tool and when, how, and why to report inconclusive results. You'll need to know how to facilitate a "technical review" and package your results for an independent "peer review." You'll also need to know that there may be a better tool for a specific job - something a manufacturer's representative won't often share.

Align learning with critical organizational imperatives - I've studied the 2009 NAS report. I understand its implications. The organization that the analyst serves is the Trier of Fact, not the organization that issues their pay. The courses that I've designed and deliver match not only with the US context, but also align with the standards produced in the UK, EU, India, Australia, and New Zealand (many other countries choose to align their national standards with one of these contexts).

Allocate limited training dollars judiciously - Whilst most training vendors continue to raise prices, I've dropped mine. I've even found ways to deliver the content on-line as micro learning, to get prices as low as possible. Plus, it helps your agency meet its sustainability goals.

Ensure learning sticks on the job - Building courses for all learning styles helps to ensure that you'll remember, and apply, your new knowledge and skills.

Provide learners with the tools needed to be agile and grow - this is another reason for building on-line micro learning offerings. Learning can happen when and where you're available, and where and when you need it.

Improve organizational outcomes, return on mission, and the bottom line - competency based training events help you improve your processing speed, which translates to more cases worked per day / week / month. This is your agency's ROM and ROI. Getting the work done right the first time is your ROM and ROI.

I'd love to see you in a class soon. See for yourself what independence means for learning events. You can come to us in Henderson, NV. We've got seats available for our upcoming Introduction to forensic multimedia analysis with Amped FIVE sessions (link). You can join our on-line offering of that course (link), saving a ton of money doing so. Or, we can come to you.

Have a great day, my friends.

keywords: audio forensics, video forensics, image forensics, audio analysis, video analysis, image analysis, forensic video, forensic audio, forensic image, digital forensics, forensic science, amped five, axon five, training, forensic audio analysis, forensic video analysis, forensic image analysis, amped software, amped software training, amped five training, axon five training, amped authenticate, amped authenticate training

Friday, December 13, 2019

Techno Security & Digital Forensics Conference - San Diego 2020

It seems that the Techno Security conference's addition of a San Diego date was enough of a success that they've announced a conference for 2020. This is good news. I've been to the original Myrtle Beach conference several times. All of the big players in the business set up booths to showcase their latest. For practitioners, it's a good place to network and see what's new in terms of tech and technique.

It's good to see the group growing. Myrtle Beach is a bit of a pain to get to. Adding dates in Texas and California helps folks in those states get access and avoid the crazy travel policies imposed by their respective states. Those crazy travel policies are at the heart of my moving my courses on-line.

The sponsor list is a who's-who of the digital forensics industry. It contains everyone you'd expect, plus one you wouldn't - LEVA. Why is LEVA, a 501(c)(3) non-profit, a Gold Sponsor? How is it that a non-profit public benefit corporation has about $10,000 to spend on sponsoring a commercial event?

As I write this, I am currently an Associate Member of LEVA. I'm an Associate Member because, in the view of LEVA's leadership, my retirement from police service disqualifies me from full membership. I've been a member of LEVA for as long as I've been aware of the organization, going back to the early 2000's. I've volunteered as an instructor at their conferences, believing in the mission of practitioners giving back to practitioners. But, this membership year (2019) will be my last. Clearly, LEVA is no longer a non-profit public benefit corporation. Their presence as a Gold Sponsor at this conference reveals their profiteering motive. They've officially announced themselves as a "training provider," as opposed to a charity that supports government service practitioners. Thus, I'm out. Let me explain my decision making on this in detail.

Consider what it will take if you choose to maintain the fiction that LEVA is a membership support / public benefit charity.

- Gold Sponsorship is $6300 for one event, or $6000 per event if the organization commits to multiple events. Let's just assume that they've come in at the single event rate.

- Rooms at the event location, a famous golf resort, are advertised at the early bird rate of $219 plus taxes and fees. Remember, the event is being held in California - one of the costliest places to hold such an event. Taxes in California are some of the highest in the country. Assuming only two LEVA representatives travel to work the event, and the duration of the event, lodging will likely run about a thousand per person.

- Add to that the "per diem" that will be paid to the workers.

- Add transportation to / from / at.

- Consider that, as a commercial entity, LEVA will likely have to give things away - food / drink / swag - to attract attention.

The grand total for all of this will exceed $10,000 if done on the cheap. What's the point of being a Gold Sponsor if one intends to do such a show on the cheap? I know that a two-person showing at a conference, whilst I was with Amped Software, ran between $15,000 and $25,000 per show. But, even at $10,000, what is the benefit to the public - the American taxpayer - by this non-profit public benefit organization's attendance at this commercial event? Hint: there is none.
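For those who want to check the arithmetic, here's a quick sketch in Python using only the two figures given above: the single-event Gold Sponsorship rate and roughly a thousand dollars of lodging for each of two representatives. No dollar values are given here for per diem, transportation, or giveaways, so the sketch doesn't invent any. It simply shows how much those three items would need to add up to in order to cross $10,000.

```python
# Figures stated in the post.
GOLD_SPONSORSHIP = 6300      # single-event Gold Sponsor rate, in USD
LODGING_PER_PERSON = 1000    # "about a thousand per person", in USD
REPRESENTATIVES = 2          # "assuming only two LEVA representatives"
THRESHOLD = 10_000           # the total the post says will be exceeded

# Subtotal of the items that have a stated price.
known_subtotal = GOLD_SPONSORSHIP + LODGING_PER_PERSON * REPRESENTATIVES

# What per diem, transportation, and giveaways must total to reach the threshold.
remaining_to_threshold = THRESHOLD - known_subtotal

print(f"Known subtotal: ${known_subtotal:,}")                  # $8,300
print(f"Needed from other items: ${remaining_to_threshold:,}")  # $1,700
```

In other words, per diem, transportation, and giveaways for two people over the run of the show only have to come to $1,700 combined to pass the $10,000 mark.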

If LEVA is a membership organization, then it would take an almost doubling of the membership - as a result of this one show - to break even on the event. We all know that's not going to happen.

If you need further convincing, let's revisit their organizational description from the sponsor's page. This is the information that LEVA provided to the host. This is the information LEVA wants attendees at the conference to have about LEVA.

Ignoring the obvious misspelling (we all do it sometimes), this statement is in conflict with the LEVA web site's description of the organization:

"LEVA is a non-profit corporation committed to improving the quality of video training and promoting the use of state-of-the-art, effective equipment in the law …"

First, what is a "global standard?" If you're thinking of an international standard for continuing education and training, the relevant "standards body" might be the International Association for Continuing Education and Training (the IACET). LEVA's programs are not accredited by the IACET. How do I know this? As someone who designs and delivers Competency Based Training, I'm an IACET member. I logged in and checked. LEVA is not listed. You don't have to be a member to check my claim. You can visit their page and see for yourself. Do they at least follow the relevant ANSI / IACET standard for the creation, delivery, and maintenance of Competency Based Training programs? You be the judge.

Is LEVA the "only organization in the world that provides court-recorgnized training in video forensics that leads to certification"? Of course not. ANSI is a body that accredits certification programs. LEVA is not ANSI accredited. The International Certification Accreditation Council (ICAC) is another. ICAC follows the ISO/IEC 17024 Standards. LEVA is not accredited by ICAC either. Additionally, the presence of the IAI in the marketplace (providing both training events and a certification program) gives the lie to LEVA's marketing. LEVA is not the "only organization" providing training, it's one of many.

LEVA is not the only organization providing certification either. There's the previously mentioned IAI, as well as the ETA-i. Unlike the IAI and LEVA, the ETA-i's Audio-Video Forensic Analyst certification program (AVFA) is not only accredited, but also tracks with the ASTM's E2659 - Standard Practice for Certificate Programs.

Consider the many "certificates of training" that LEVA issued for the information sessions that I facilitated at its conferences over the years. I was quite explicit in describing my presence there, and what the session was - an information session. They weren't competency based training events. Yet, LEVA issued "certificates" for those sessions and many of the attendees considered themselves "trained." Section 4.2 of ASTM E2659 speaks to this problem.

Further to the point, Section 4.3 of ASTM E2659 notes "While certification eligibility criteria may specify a certain type or amount of education or training, the learning event(s) are not typically provided by the certifying body. Instead, the certifying body verifies education or training and experience obtained elsewhere through an application process and administers a standardized assessment of current proficiency or competency." The ETA-i meets this standard with its AVFA certification program. So does the IAI. LEVA does not.

We'll ignore the "court-recognized" portion as completely meaningless. "The court" has "recognized" every copy of my CV ever submitted - if by "recognized" you mean "the Court" accepted its submission - as in, "yep, there it is." But, courts in the US do not "approve" certification or training programs. To include the "court-recognized" statement is just banal.

If you wish, dive deeper into ASTM E2659. Section 5.1 speaks to organizational structure. In 5.1.2.1, the document notes: "The certificate program’s purpose, scope, and intended outcomes are consistent with the stated mission and work of the certificate issuer." Clearly, that's not true in LEVA's case. Are they a non-profit public benefit corporation or are they a commercial training and certification provider? This is an important question for ethical reasons. As a non-profit public benefit organization, they're entitled to many tax breaks not available to commercial organizations. As a non-profit, working in the commercial sphere, they receive an unfair advantage when competing in the market by not having to pay federal taxes on their revenue. Didn't we all take an ethics class at some point? I understand Jim Rome's "if you're not cheating, you're not trying" statements as relates to the New England Patriots and other sports franchises. But this isn't sports. Given their sponsorship statement, LEVA should dissolve as a non-profit public benefit organization and reconstitute itself as a commercial training provider and certificate program.

The commercial nature of LEVA is reinforced by its first ever publicly released financial statement and its tax filings. More than 80% of its efforts are training related. Its officers have salaries. Its instructors are paid (not volunteers). Heck, almost 50% of its expenses are for travel. On paper, LEVA looks like a subsidized travel club for select current / former government service employees.

Then there's the question of the profit. For a non-profit public benefit organization, its profits are supposed to be rolled back into its mission of benefiting the public. Nothing about the financial statement shows a benefit to the public. Also, the "Director Salaries" is a bit of a typo. Only one director gets paid. That $60,000 goes to one person. And, on LEVA's 2017 990 filing with the IRS, the director was paid $42,000. Given the growth in revenue from 2017, the roughly 43% raise (from $42,000 to $60,000) was likely justified in the minds of the Board of Directors. If you are wondering about the trend, you're out of luck. The IRS' web site only lists two years of returns (2017 & 2018) - meaning it's likely none were filed for years previous.
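The size of that raise is easy to check from the two salary figures cited above. A quick sketch in Python (the function and variable names are mine, for illustration only):

```python
def percent_change(old: float, new: float) -> float:
    """Return the change from old to new as a percentage of old."""
    return (new - old) / old * 100.0

# Figures as cited in the post: the 2017 Form 990 and the later financial statement.
salary_2017 = 42_000
salary_later = 60_000

# The raise, measured against the starting salary.
print(f"Raise: {percent_change(salary_2017, salary_later):.1f}%")  # Raise: 42.9%

# For comparison, the old salary as a share of the new one.
print(f"Old salary as share of new: {salary_2017 / salary_later:.0%}")  # 70%
```

Note the two numbers answer different questions: the raise is measured against the old salary, while 70% is simply the old salary divided by the new one.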

Another issue with LEVA's status as a non-profit public benefit organization is that of giving back to the community. In all of the publicly available 990 filings, the forms show zero dollars given in grants or similar payouts.

[Image: 2017 filing]

[Image: 2018 filing]

No monies given as grants, yet their current filing shows quite a bit in savings.

[Image: 2018 Cash on Hand]

Clearly, LEVA will not miss my $75 annual dues payment with that kind of cash lying around.

If you're still here, let's move away from the financial and go a little farther down in Section 5. In 5.2.1.1, LEVA necessarily and spectacularly fails item (11) - nondiscrimination. LEVA, by its very nature, discriminates in its membership structure. It discriminates against anyone who is not a current government service employee or a "friend of LEVA." This active discrimination (arbitrary classes of members and the denial of membership to those not deemed worthy) would disqualify it from accreditation by any body that uses ASTM E2659 as its guide.

All of this informs my decision to not renew my LEVA membership. I am personally very charitable. But, I have no intention of giving money to a commercial enterprise that competes with my training offerings. Besides, given their balance sheet, they certainly don't need my money. It's not personal. It's a proper business decision.

Thursday, December 12, 2019

Introduction to Forensic Multimedia Analysis with Amped FIVE - seats available

Seats Available!

Did your agency purchase Amped FIVE / Axon Five, but skip the training? Learn the whole workflow - from unplayable file, to clarified and restored video that plays back in court.

Course Date: Jan 20-24, 2020.

Course location: Henderson, NV, USA.

Click here to sign up for Introduction to Forensic Multimedia Analysis with Amped FIVE.

Tuesday, December 10, 2019

Alternative Explanations?

I've sat through a few sessions on the Federal Rules of Evidence. Rarely does the presenter dive deep into the Rules, providing only an overview of the relevant rules for digital / multimedia forensic analysis.

A typical slide will look like the one below:

Those who have been admitted to trial as an Expert Witness should be familiar with (a) - (d). But, what about the Advisory Committee's Notes?

Deep in the notes section, you'll find this preamble:

"Daubert set forth a non-exclusive checklist for trial courts to use in assessing the reliability of scientific expert testimony. The specific factors explicated by the Daubert Court are (1) whether the expert's technique or theory can be or has been tested—that is, whether the expert's theory can be challenged in some objective sense, or whether it is instead simply a subjective, conclusory approach that cannot reasonably be assessed for reliability; (2) whether the technique or theory has been subject to peer review and publication; (3) the known or potential rate of error of the technique or theory when applied; (4) the existence and maintenance of standards and controls; and (5) whether the technique or theory has been generally accepted in the scientific community. The Court in Kumho held that these factors might also be applicable in assessing the reliability of nonscientific expert testimony, depending upon “the particular circumstances of the particular case at issue.” 119 S.Ct. at 1175.

No attempt has been made to “codify” these specific factors. Daubert itself emphasized that the factors were neither exclusive nor dispositive. Other cases have recognized that not all of the specific Daubert factors can apply to every type of expert testimony. In addition to Kumho, 119 S.Ct. at 1175, see Tyus v. Urban Search Management, 102 F.3d 256 (7th Cir. 1996) (noting that the factors mentioned by the Court in Daubert do not neatly apply to expert testimony from a sociologist). See also Kannankeril v. Terminix Int'l, Inc., 128 F.3d 802, 809 (3d Cir. 1997) (holding that lack of peer review or publication was not dispositive where the expert's opinion was supported by “widely accepted scientific knowledge”). The standards set forth in the amendment are broad enough to require consideration of any or all of the specific Daubert factors where appropriate."

Important takeaways from this section:

- (1) whether the expert's technique or theory can be or has been tested—that is, whether the expert's theory can be challenged in some objective sense, or whether it is instead simply a subjective, conclusory approach that cannot reasonably be assessed for reliability;

- (2) whether the technique or theory has been subject to peer review and publication;

- (3) the known or potential rate of error of the technique or theory when applied;

- (4) the existence and maintenance of standards and controls; and

- (5) whether the technique or theory has been generally accepted in the scientific community.

As to point 1, the key words are "objective" and "reliability." Part of this relates to a point further on in the notes - did you adequately account for alternative theories or explanations? More about this later in the article.

To point 2, many analysts confuse "peer review" and "technical review." When you share your report and results in-house, and a coworker checks your work, this is not "peer review." This is a "technical review." A "peer review" happens when you or your agency sends the work out to a neutral third party to review the work. See ASTM E2196 for the specific definitions of these terms. If your case requires "peer review," we're here to help. We can either review your case and work directly, or facilitate a blind review akin to the publishing review model. Let me know how we can help.

To point 3, there are known and potential error rates for disciplines like photographic comparison and photogrammetry. Do you know what they are and how to find them? We do. Let me know how we can help.
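To make point 3 a bit more concrete, here is a minimal sketch of how an observed error rate from a validation study can be reported together with its uncertainty. It uses the Wilson score interval, which is one common choice and not the only one. The counts in the example are hypothetical, not results from any real study, and the function name is my own.

```python
import math

def wilson_interval(errors: int, trials: int, z: float = 1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%).

    errors: number of incorrect conclusions observed (hypothetical input)
    trials: number of comparisons with known ground truth (hypothetical input)
    """
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)

# Hypothetical example: 3 errors in 100 ground-truth comparisons.
low, high = wilson_interval(3, 100)
print(f"Observed error rate: {3 / 100:.1%}")
print(f"Approximate 95% interval: {low:.1%} to {high:.1%}")
```

The point of the interval is that a small test says less than it appears to. Zero errors in 50 trials does not mean an error rate of zero; the same function returns an upper bound of roughly 7% for that case.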

To point 4, either an agency has their own SOPs or they follow the consensus standards found in places like the ASTM. If your work does not follow your own agency's SOPs, or the relevant standards, that's a problem.

To point 5, many consider "the scientific community" to be limited to certain trade associations. Take LEVA, for example. It's a trade group of mostly government service employees at the US/Canada state and local level. Is "the scientific community" those 300 or so LEVA members, mostly in North American law enforcement? Of course not. Is "the scientific community" inclusive of LEVA members and those who have attended a LEVA training session (but are not LEVA members)? Of course not. The best definition of "the scientific community" that I've found comes from Scientonomy. Beyond the obvious disagreement between how "the scientific community" is portrayed at LEVA conferences vs. the actual study of the scientific mosaic and epistemic agents, a "scientific community" should at least act in the world of science and not rhetoric - proving something as opposed to declaring something.

Further down the page, we find this:

"Courts both before and after Daubert have found other factors relevant in determining whether expert testimony is sufficiently reliable to be considered by the trier of fact. These factors include:

- (3) Whether the expert has adequately accounted for obvious alternative explanations. See Claar v. Burlington N.R.R., 29 F.3d 499 (9th Cir. 1994) (testimony excluded where the expert failed to consider other obvious causes for the plaintiff's condition). Compare Ambrosini v. Labarraque, 101 F.3d 129 (D.C.Cir. 1996) (the possibility of some uneliminated causes presents a question of weight, so long as the most obvious causes have been considered and reasonably ruled out by the expert)."